So if you're reading this, you have most likely needed to mount a remote share over ssh/NFS automatically and use it as a local folder. This is super handy for doing daily backups or accessing remote backups through a local mount point.

What is autofs?

Autofs is a service daemon that automatically mounts and remounts remote sshfs, NFS, and other types of shares for you on demand. Whenever the mount point is accessed, it mounts the remote share if it is not already mounted. This is far more reliable than custom fstab mounts, which can fail to reestablish themselves if your machine experiences network hiccups. You can read more about the nitty gritty on the man page here.

How to install autofs

This should cover both CentOS and Debian/Ubuntu/Mint Linux based installations. The below guide covers how I set up a few mount points over sshfs via autofs.

Install autofs first via sudo or root.

Debian/Ubuntu/Mint Linux

CentOS/RHEL/rpm based
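The install commands are roughly as follows. This is only a sketch: the package names (autofs plus sshfs on Debian/Ubuntu/Mint, autofs plus fuse-sshfs on CentOS/RHEL, where fuse-sshfs usually comes from the EPEL repository) are my assumption for a typical setup, and the helper name autofs_install_cmd is made up for illustration. It only prints the command, so you can check it before running it as root or with sudo.

```shell
# Hypothetical helper: print the install command for a package manager.
# Package names are assumptions for a typical sshfs-over-autofs setup.
autofs_install_cmd() {
    case "$1" in
        apt-get) echo "apt-get install -y autofs sshfs" ;;       # Debian/Ubuntu/Mint
        yum)     echo "yum install -y autofs fuse-sshfs" ;;      # CentOS/RHEL (EPEL)
        *)       echo "unsupported package manager: $1" >&2; return 1 ;;
    esac
}

# Show both variants; run the one that matches your system as root or via sudo.
autofs_install_cmd apt-get
autofs_install_cmd yum
```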

Once installed, we set up the default base mount for any shares we're going to add.

In my case I wanted all added shares to show up as subfolders under “/mnt”.

So to configure this I added the below line to the bottom of /etc/auto.master. Please note: if this is going to be run and mounted under a sudo user, the uid and gid (user ID and group ID) will need to be adjusted to match yours. The below line assumes this is going to be mounted as the root user.

This would be done by using nano or vi to open and edit the file. I’m a big fan of nano so these commands have that as default. If you prefer vi you probably already know how to do this and don’t need any handholding. /end rant lol
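A minimal sketch of such a master map line, assuming the map file is /etc/auto.sshfs (the file used later in this guide). The 30 second --timeout and the --ghost option are assumed values for illustration, not necessarily the ones I used, so adjust them to taste.

```shell
# Sketch only: the timeout and --ghost are assumed values.
uid=0   # user id that should own the mounted files (0 = root)
gid=0   # group id that should own the mounted files (0 = root)

# Format: <base dir> <map file> <options>
master_line="/mnt /etc/auto.sshfs uid=${uid},gid=${gid},--timeout=30,--ghost"
echo "$master_line"

# To append it without opening an editor (needs root):
#   echo "$master_line" | sudo tee -a /etc/auto.master
```

For a non-root user, the output of “id -u” and “id -g” gives the values to put in uid and gid.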

Now that we have the base default path set up we can focus on adding the mount points. If this is for a remote server ssh/sshfs based path, we highly recommend ensuring you already have passwordless key-based authentication set up between the machines, so there are no password prompts and no passwords saved in a text file or command locally. If that is not already done please see a tutorial like this one to get that set up first. When generating the keypair, leave the passphrase empty (hit enter twice when prompted instead of specifying one), and generate it on the machine that's going to access the remote share.

With that now setup we can create the mount points.

In my case I wanted to mount 2 remote sshfs based folders to access and store backups.

Folder paths:

morgan

rsyncnet

These would end up becoming /mnt/morgan and /mnt/rsyncnet once mounted.

So first we would need to craft the sshfs mount string needed for them both. See below examples. You would replace the name in front and the “Username@HostnameorIP:/remote/path” section with your sshfs connection information.
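As a sketch of what such a mount string can look like, the helper below prints one map entry per share. The helper name sshfs_map_entry and the option list (rw,allow_other) are assumptions for illustration; the key point is the autofs map syntax, where the “#” and the “:” before the remote path have to be escaped with a backslash.

```shell
# Hypothetical helper: print one autofs map entry for an sshfs share.
#   $1 = subfolder name, $2 = Username@HostnameorIP, $3 = remote path
# The mount options are an assumed minimal set; extend them as needed.
sshfs_map_entry() {
    printf '%s -fstype=fuse,rw,allow_other :sshfs\\#%s\\:%s\n' "$1" "$2" "$3"
}

# One line per share; replace the placeholders with your own details.
sshfs_map_entry morgan   Username@HostnameorIP /remote/path
sshfs_map_entry rsyncnet Username@HostnameorIP /remote/path
```

Each printed line is one entry for the map file, with the first column becoming the subfolder name under the base mount.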

Once you have the mount strings created we need to add them to the “/etc/auto.sshfs” file. So let's create or edit this file and add the line(s) you created above. We're going to use nano for that as the root or sudo user, then save and exit.

Now if you already had these paths mounted manually via fstab you will want to unmount them before proceeding.

Now we need to restart the autofs service so it sees the configuration changes we made to /etc/auto.master and /etc/auto.sshfs, for example with “sudo service autofs restart”.

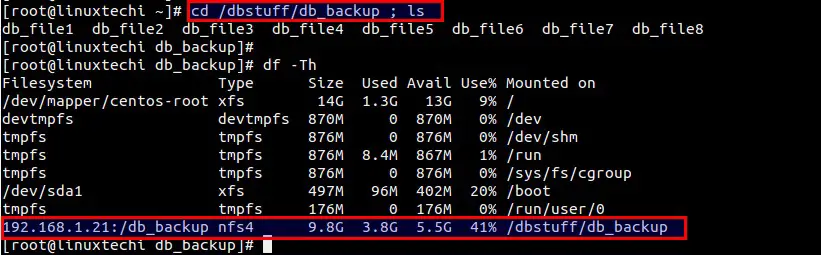

Now we can test if it's working by doing a “df -h” and seeing if the mounts are currently there. If they're not showing, we can test the automount by changing into the mount point or trying to access it to kickstart it. In my case we're testing with the first mount point, /mnt/morgan.

Once you try to access it, it might hang for a sec the first time while it connects, then you should see a listing of any files in that path. To confirm it's mounted, do another “df -h”; the mount point should now appear in the list.
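If you would rather script that check than eyeball the “df -h” output, something like the sketch below works. The function name is_mounted is made up for illustration, and it assumes a Linux system where /proc/mounts lists the current mounts.

```shell
# is_mounted PATH [MOUNTS_FILE]
# Succeeds if PATH appears as a mount point in MOUNTS_FILE
# (defaults to /proc/mounts, which Linux keeps up to date).
is_mounted() {
    awk -v target="$1" '$2 == target { found = 1 } END { exit !found }' \
        "${2:-/proc/mounts}"
}

# Typical use: the ls is the first access that triggers the automount.
#   ls /mnt/morgan >/dev/null && is_mounted /mnt/morgan && echo "mounted"
```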

Now you have an automatic remote share mounted that you can copy to or from to access or store backups etc. As it's now a local path, it's much easier to do an rsync or DB dump to this mount location and have it stored offsite.

We hope you enjoyed this tutorial and that it saves you a lot of frustration working with remote shares. If you have multiple servers that all need to reach one remote backup server, you can easily reuse the specific share line from /etc/auto.sshfs after installing autofs and configuring ssh key-based connections on each server.

Special shoutout to the people behind the guides linked below for inspiration. If you still have questions, the links below also cover more detail and edge cases I might not have covered, like NFS shares, which are done similarly but have some quirks.

https://wiki.archlinux.org/index.php/Autofs

https://linux.die.net/man/5/autofs

https://help.ubuntu.com/community/Autofs

https://access.redhat.com/documentation/en-US/Red_Hat_Enterprise_Linux/4/html/System_Administration_Guide/Mounting_NFS_File_Systems-Mounting_NFS_File_Systems_using_autofs.html

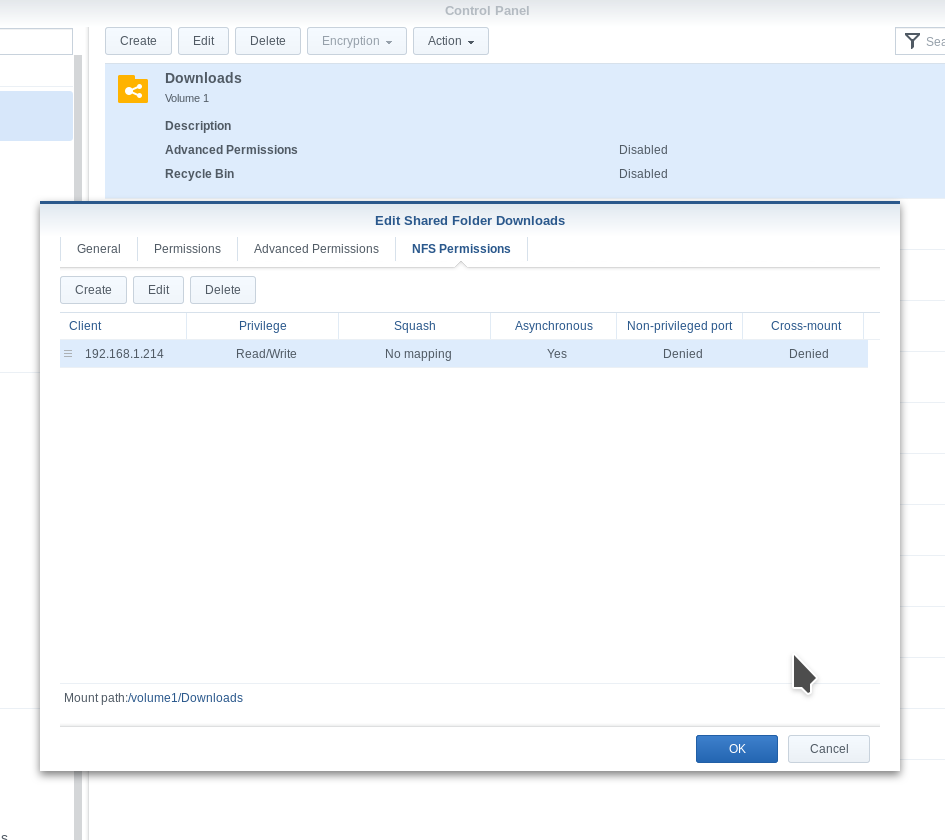

Automount/autofs is a Linux daemon which allows for behind the scenes mounting and unmounting of NFS exported directories. Basically with autofs NFS shares will be automatically mounted when a user or system attempts to access their resources and disconnected after a period of inactivity. This minimizes the number of active NFS mounts and is generally transparent to users.

First we’ll install autofs and include the NFS client:

$ sudo apt-get -y install autofs nfs-common

Now we need to define autofs maps, which are configuration files that tell autofs which NFS mounts to define. With autofs there are two types of maps, direct and indirect. With direct maps we define a list of filesystems to mount that do not share a common higher-level directory on the client. With indirect maps the mounts share a common directory hierarchy, and a bit less overhead is required.

The advantage of direct maps is that if the user runs a command such as “ls” on the parent directory, the mount point shows up, whereas with indirect maps the user has to actually access the directory itself. This can cause some confusion because running “ls” on a directory containing indirect mounts will not show the autofs directories until their contents have been accessed. Indirect maps may also not be reachable by browsing the directory structure with a GUI file manager; you'll need to type in the path to get to them. In this example I'll show the use of both types of maps.

Master Map

First we need to define a master map, which tells autofs which indirect/direct maps we want to use and the config files to read for each. Edit the “auto.master” file and append this content:

$ sudo vi /etc/auto.master

# directory map

/server1 /etc/auto.server1

/- /etc/auto.direct

The first entry is an indirect map: all of the mounts will be created under the /server1 directory and the configuration will be read from “auto.server1”. With direct maps we use the special mount point “/-” and read the config from “auto.direct”.

Indirect Maps

Time to set up our indirect maps:

$ sudo vi /etc/auto.server1

apps -ro server1:/nfs/apps

files -fstype=nfs4 server1:/nfs/files

The first column represents the subdirectory to be created under “/server1”. The last column shows the host and NFS export, with apps mounted read-only. Notice that for files we specify NFSv4, so the NFS server must support NFSv4. Not specifying an “fstype” makes autofs use its default type, nfs, where the protocol version is left to the client's NFS mount defaults (NFSv3 on older systems).
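To make the key-to-path relationship concrete, the sketch below lists where each entry of an indirect map ends up. The helper name indirect_paths is made up for illustration, and the demo writes the two entries above to a temporary file instead of touching /etc.

```shell
# indirect_paths BASE_DIR MAP_FILE
# Print "<mount path> <- <host:export>" for every entry in an indirect map,
# skipping comment lines and lines with fewer than two columns.
indirect_paths() {
    awk -v base="$1" '!/^#/ && NF >= 2 { print base "/" $1 " <- " $NF }' "$2"
}

# Demo with the two entries from this guide, stored in a scratch file.
demo_map=$(mktemp)
cat > "$demo_map" <<'EOF'
apps -ro server1:/nfs/apps
files -fstype=nfs4 server1:/nfs/files
EOF

indirect_paths /server1 "$demo_map"
rm -f "$demo_map"
```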

Direct Maps

Now we’ll do a direct map:

$ sudo vi /etc/auto.direct

/mnt/data server1:/nfs/data

Setting up a direct map is basically like configuring an NFS mount in the “/etc/fstab” file. Include the full directory name from /, and include the host and NFS export names. The options that we used with the indirect maps above can also be used on direct map mounts if desired.

While I don’t believe it is explicitly required, I have had difficulty accessing NFS shares unless the directory for the direct map is created manually before running autofs:

$ sudo mkdir /mnt/data

Now we need to configure automount/autofs to start when the system starts:

$ sudo update-rc.d autofs defaults

I am using the “update-rc.d” command, which has very similar functionality to the “chkconfig” utility in the Red Hat world. It creates links in the various runlevel directories to the daemon's initialization script. Autofs is the daemon name, and the defaults option tells the system to start autofs in runlevels 2-5 and stop it in 0, 1, and 6. Most of the startup runlevels are not used with Debian/Ubuntu; runlevel 2 is the one that matters most to us.

Now restart the autofs daemon to pickup the new configuration:

$ sudo service autofs restart

Browse to the newly established autofs mounts or type in the full path where you mounted the NFS export if using indirect maps. The files on your NFS host should now be available on your client!